Smart Manufacturing | Digital Twin

The era of Digital Twins has arrived. We had the opportunity to interview Dr. Rick James, currently the CEO of SimuTech Group, about digital twin technology and multi-domain system simulation.

“Everyone’s talking about digital twin now,” said Dr. James. “It’s almost like an echo chamber and if you look at the Gartner Hype Cycle, digital twin is right at the peak.” A digital twin is a connected virtual replica of an in-service physical asset, in the form of an integrated multi-domain system simulation, that mirrors the life and experience of the asset.

Why is everyone talking about Digital Twin?

Because it lies at the convergence of economics and technology. The software, hardware, and means to capture and transmit data, all of which are required to build digital twin models, are becoming more available and more affordable. At the same time, companies face ever-present competitive pressure to become more economically efficient by minimizing opportunity costs.

These forces are now converging, making digital twin methodology viable. Engineering simulation has been used in product development for decades to speed time to market, optimize designs, and reduce costs, and these methodologies are now being embraced more broadly to optimize operations as well.

What is Digital Twin?

“No one has the same definition of a digital twin,” said Dr. James. In practice, a digital twin lets engineers communicate with sensors on a product and gather information for real-time monitoring and assessment. A key advantage is the ability to run continuous monitoring and diagnostics, which enables benefits such as predictive maintenance. A digital twin can also help companies plan manufacturing processes and factory operations proactively, minimize unplanned downtime, and even predict equipment failure. Knowing when equipment needs to be replaced or maintained allows for more carefully planned operations.
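As a rough illustration of the monitoring idea, the sketch below compares sensor readings with the values a twin's model predicts and flags the asset when the gap stays above a tolerance for several samples in a row. The function name, the tolerance, and the sample data are hypothetical and chosen only for illustration; they are not taken from Dr. James or from any particular product.

```python
from typing import Sequence


def flag_for_maintenance(
    measured: Sequence[float],
    predicted: Sequence[float],
    tolerance: float,
    min_consecutive: int = 3,
) -> bool:
    """Return True if readings deviate from the twin's prediction by more
    than `tolerance` for at least `min_consecutive` samples in a row."""
    streak = 0
    for m, p in zip(measured, predicted):
        if abs(m - p) > tolerance:
            streak += 1
            if streak >= min_consecutive:
                return True
        else:
            # A single in-tolerance sample resets the count.
            streak = 0
    return False


# Hypothetical bearing temperatures in degrees Celsius.
predicted = [50.0] * 6
drifting = [50.2, 50.1, 53.0, 53.5, 54.1, 54.8]   # sustained drift
noisy = [50.2, 53.0, 50.1, 49.9, 50.3, 50.0]      # one isolated spike

print(flag_for_maintenance(drifting, predicted, tolerance=2.0))
print(flag_for_maintenance(noisy, predicted, tolerance=2.0))
```

Requiring several consecutive out-of-tolerance samples is one simple way to avoid reacting to a single noisy reading; a production system would likely use a more careful statistical test.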

The digital twin methodology is more useful for meeting economic goals than it is for specific technology end goals, especially for complex projects and systems. “Remote modeling of sensors has been around for forever and is really old news. A digital twin gives you far more complex reliability insight within complex systems, like you need with a wind turbine with lots of components and multiple physics driving reliability and performance.”

What are the core components of a Digital Twin?

A digital twin has several core components: sensors, data analytics and controls, a communication platform (e.g., a cloud platform), and decision-making physics. A digital twin can compile massive amounts of sensor data in areas such as pattern recognition, fluid flow, motion, velocity, and displacement.

Digital Twin | Mineral Processing

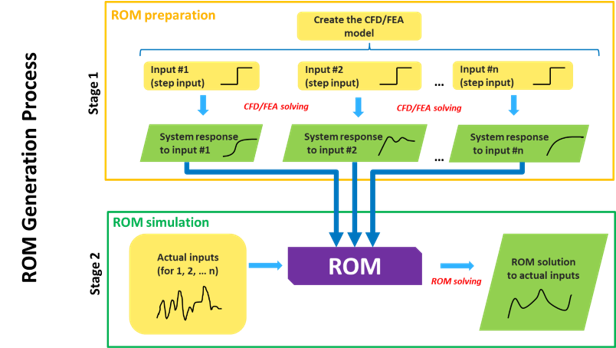

A key building block in digital twin methodology is reduced-order modeling (otherwise known as ROM), which reduces and simplifies large-scale full-fidelity models into essential inputs and outputs. “Essentially, ROM boils down the essence of a complex system into something simple – something that is typically a neutral format,” explained Dr. James.

For example, a heat exchanger unit may be one component in a rather complex system. While the actual workings of the heat exchanger may be complex in and of themselves, the only parameters provided by the ROM are the input and output temperatures.

No other engineering data, especially proprietary material properties and CAD geometry data, is provided. A systems-level model can integrate and utilize thousands of these ROMs at a time.
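To make the black-box idea concrete, here is a minimal sketch in which each ROM exposes only an inlet temperature and an outlet temperature, and a system-level model chains several of them. The constant-effectiveness relation, the function names, and the numbers are assumptions made for illustration; a real ROM would be derived from a full-fidelity simulation and delivered in whatever exchange format the tools support.

```python
from typing import Callable, Sequence

# A ROM is treated as a black box: inlet temperature in, outlet temperature out.
Rom = Callable[[float], float]


def make_heat_exchanger_rom(effectiveness: float, coolant_temp_c: float) -> Rom:
    """Build a hypothetical heat exchanger ROM.

    Geometry and material data never leave this function; the caller
    only receives the input/output mapping.
    """
    def rom(inlet_temp_c: float) -> float:
        # Constant-effectiveness relation, used here purely as a stand-in.
        return inlet_temp_c - effectiveness * (inlet_temp_c - coolant_temp_c)

    return rom


def run_system(roms: Sequence[Rom], inlet_temp_c: float) -> float:
    """Chain ROMs in series: each outlet temperature feeds the next inlet."""
    temp = inlet_temp_c
    for rom in roms:
        temp = rom(temp)
    return temp


stage_1 = make_heat_exchanger_rom(effectiveness=0.5, coolant_temp_c=20.0)
stage_2 = make_heat_exchanger_rom(effectiveness=0.5, coolant_temp_c=20.0)

outlet = run_system([stage_1, stage_2], inlet_temp_c=100.0)
print(outlet)  # 100 -> 60 -> 40 degrees Celsius
```

Because the system model depends only on the input/output signature, a supplier could swap in a more accurate ROM without the customer changing any system-level code.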

Ultimately, Dr. James believes that the standard for engineering and product development designs will include a ROM file.

Some equipment manufacturers already provide CAD drawings (CAD files) so that their equipment, or at least its outer dimensions, can be placed in a customer’s CAD drawings, such as a plant layout. The next evolution in the process is providing a ROM file, further enhancing current multi-domain system simulation capabilities.

Companies should hit the proverbial ground running and consider digital twin methodologies now, not later. “Digital twins are 2 to 5 years away from hitting their stride for true productivity, but it takes a while for technologies to work their way into companies so that they’re productive and valuable,” said Dr. James.